There are 3 different APIs for model evaluation:

1. Estimator score method: Estimator/model object has a ‘score()’ method that provides a default evaluation

2. Scoring parameter: Predefined scoring parameter that can be passed into cross_val_score() method

3. Metric function: Functions defined in the metrics module

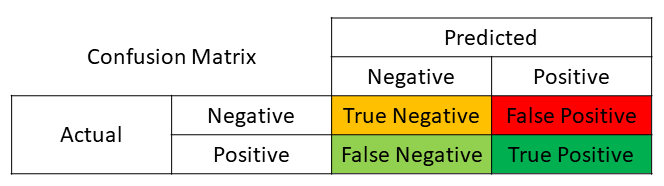

Confusion Matrix is an example of Metric function API.

Confusion matrix can be read as a table where each cell of the table is the number of predictions made by an estimator/algorithm.

Note:

– For the classification problem, we will use the Pima Indians onset of diabetes dataset.

– Estimator/Algorithm: Logistic Regression

– Cross-Validation Split: Train Test Split

This recipe includes the following topics:

- Load data/file from github

- Split columns into the usual feature columns(X) and target column(Y)

- Set set test_size to 33%

- Set seed to reproduce the same random data each time

- Split data using train_test_split()

- Instantiate a classification model (LogisticRegression)

- Call fit() to train model using X_train and Y_train

- Call predict() to use the model for making predictions

- Calculate confusion matrix using confusion_matrix

# import modules

import pandas as pd

import numpy as np

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split

from sklearn.metrics import confusion_matrix

# read data file from github

# dataframe: pimaDf

gitFileURL = 'https://raw.githubusercontent.com/andrewgurung/data-repository/master/pima-indians-diabetes.data.csv'

cols = ['preg', 'plas', 'pres', 'skin', 'test', 'mass', 'pedi', 'age', 'class']

pimaDf = pd.read_csv(gitFileURL, names = cols)

# convert into numpy array for scikit-learn

pimaArr = pimaDf.values

# Let's split columns into the usual feature columns(X) and target column(Y)

# Y represents the target 'class' column whose value is either '0' or '1'

X = pimaArr[:, 0:8]

Y = pimaArr[:, 8]

# set test_size to 33%

test_size = 0.33

# set seed to reproduce the same random data each time

seed = 7

# split data using train_test_split() helper method

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, test_size=test_size, random_state=seed)

# instantiate a classification model

model = LogisticRegression()

# call fit() to train model using X_train and Y_train

model.fit(X_train, Y_train)

# call predict() to use the model for making predictions

predicted = model.predict(X_test)

# calculate confusion matrix

cmatrix = confusion_matrix(Y_test, predicted)

# print confusion matrix

print(cmatrix)

[[141 21]

[ 41 51]]